To support our mission of accelerating the developer journey on Google Cloud, we built Dev Signal: a multi-agent system that transforms raw community signals into reliable technical guidance by automating the path from discovery to expert content creation.

In part 1 and part 2 of this series, we standardized core capabilities through the Model Context Protocol (MCP) and built a multi-agent architecture integrated with the Vertex AI memory bank for long-term intelligence and persistence. Now we'll show you how to test your multi-agent system locally.

Want to jump straight into the code? Clone the repository here.

Testing the Agent Locally

Before deploying your agentic system to Google Cloud Run, verify that its specialized components work together on your workstation. This testing phase lets you validate trend discovery, technical grounding, and creative drafting within a local feedback loop, saving time and resources during development.

In this section, you'll configure local secrets, implement environment-aware utilities, and use a dedicated test runner to verify that Dev Signal correctly retrieves user preferences from the Vertex AI memory bank in the cloud. This local verification ensures your agent's "brain" and "hands" are properly synchronized before deployment.

Environment Setup

Create a .env file in your project root. These variables are used for local development and will be replaced by Terraform/Secret Manager in production.

Paste this code into dev-signal/.env and update it with your own details.

Note: GOOGLE_CLOUD_LOCATION is set to global because that's where Gemini-3-flash-preview is supported, and the agent uses it as the model location. Regional services such as the Vertex AI Agent Engine use GOOGLE_CLOUD_REGION instead.

```
# Google Cloud Configuration
GOOGLE_CLOUD_PROJECT=your-project-id
GOOGLE_CLOUD_LOCATION=global
GOOGLE_CLOUD_REGION=us-central1
GOOGLE_GENAI_USE_VERTEXAI=True
AI_ASSETS_BUCKET=your_bucket_name

# Reddit API Credentials
REDDIT_CLIENT_ID=your_client_id
REDDIT_CLIENT_SECRET=your_client_secret
REDDIT_USER_AGENT=my-agent/0.1

# Developer Knowledge API Key
DK_API_KEY=your_api_key
```
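Before running anything, it can help to confirm that your .env actually defines every variable the agent expects. The sketch below is a hypothetical pre-flight helper (missing_settings and REQUIRED_SETTINGS are illustrative names, not part of the Dev Signal repository). It assumes that any value still starting with "your" is an unedited placeholder from the template above. The check is only meaningful for local development, since in production the Reddit and Developer Knowledge keys come from Secret Manager.

```python
from typing import List, Mapping

# Hypothetical list of keys mirroring the .env template above.
REQUIRED_SETTINGS = (
    "GOOGLE_CLOUD_PROJECT",
    "GOOGLE_CLOUD_LOCATION",
    "GOOGLE_CLOUD_REGION",
    "GOOGLE_GENAI_USE_VERTEXAI",
    "AI_ASSETS_BUCKET",
    "REDDIT_CLIENT_ID",
    "REDDIT_CLIENT_SECRET",
    "REDDIT_USER_AGENT",
    "DK_API_KEY",
)


def missing_settings(env: Mapping[str, str]) -> List[str]:
    """Return required keys that are absent, empty, or still template placeholders."""
    missing = []
    for key in REQUIRED_SETTINGS:
        value = env.get(key, "").strip()
        # Assumption: template values such as "your-project-id" all start with "your".
        if not value or value.lower().startswith("your"):
            missing.append(key)
    return missing
```

For example, call missing_settings(os.environ) right after load_dotenv() and stop early if the returned list is not empty.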

Helper Utilities

Create a new directory for your application utils.

```bash
cd dev_signal_agent
mkdir app_utils
cd app_utils
```

Environment Configuration

This module standardizes how the agent discovers the active Google Cloud project and region, so the same code runs unchanged on your workstation and in the cloud. It first calls load_dotenv() to load any local .env file, then asks google.auth.default() for the project ID, falling back to the GOOGLE_CLOUD_PROJECT environment variable if credential discovery fails. This keeps your agent authenticated and grounded in the correct cloud context without manual configuration changes.
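The discovery order can be isolated into a small pure function, which makes the fallback easy to reason about and to unit-test without credentials. In this sketch, resolve_project_id and its adc_lookup parameter are hypothetical: adc_lookup stands in for google.auth.default(), which returns a (credentials, project_id) tuple. Because that project ID can be None even when credentials are found, the sketch treats a missing project the same way as a failed lookup.

```python
from typing import Any, Callable, Mapping, Optional, Tuple


def resolve_project_id(
    adc_lookup: Callable[[], Tuple[Any, Optional[str]]],
    env: Mapping[str, str],
) -> Optional[str]:
    """Prefer the project reported by ADC, then fall back to GOOGLE_CLOUD_PROJECT."""
    try:
        # Stand-in for google.auth.default(), which returns (credentials, project_id).
        _, project_id = adc_lookup()
    except Exception:
        # No usable credentials were found.
        project_id = None
    # ADC may succeed yet report no project, so fall back in that case as well.
    return project_id or env.get("GOOGLE_CLOUD_PROJECT")
```

In real code you would call it as resolve_project_id(google.auth.default, os.environ).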

Beyond project discovery, the module provides a secret-management layer. It resolves sensitive credentials, such as the Reddit API keys, first from the local environment (for rapid development) and then, for any that are still missing, from the Google Cloud Secret Manager API (for production). It returns these values as a dictionary instead of writing them into os.environ, so they aren't exposed to every library in the process or inherited by subprocesses.
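The same "local first, then remote" precedence can be sketched with the remote lookup injected as a function, so the logic can be tested without calling Secret Manager. The names resolve_secrets and fetch_remote are hypothetical; in env.py the remote role is played by SecretManagerServiceClient.access_secret_version. Like env.py, the sketch prints a warning and continues when a secret can't be fetched.

```python
from typing import Callable, Dict, Iterable, Mapping


def resolve_secrets(
    names: Iterable[str],
    env: Mapping[str, str],
    fetch_remote: Callable[[str], str],
) -> Dict[str, str]:
    """Resolve each secret from the local environment first, then a remote store."""
    resolved: Dict[str, str] = {}
    for name in names:
        local_value = env.get(name)
        if local_value:
            # Local .env values win, keeping development fast and offline.
            resolved[name] = local_value
            continue
        try:
            # Only secrets missing locally are requested from the remote store.
            resolved[name] = fetch_remote(name)
        except Exception as exc:
            # Warn and keep going rather than crash on one missing secret.
            print(f"Warning: could not fetch {name}: {exc}")
    # Returned as a plain dict; nothing is written back to os.environ.
    return resolved
```

Passing a dictionary's __getitem__ as fetch_remote is a convenient way to fake the remote store in tests.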

The module also distinguishes between the global and regional requirements of different AI services. It assigns the "global" location to models, which gives access to preview models such as Gemini-3-flash-preview, while using a regional location such as us-central1 for infrastructure like the Vertex AI Agent Engine. Finally, it calls vertexai.init() once with the project and regional location, so the rest of your application can use the Vertex AI SDK, including the memory bank, without repeatedly passing project or location parameters.
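The split between the two locations can be captured in a small helper that also guards against a common misconfiguration. This is a hypothetical sketch (resolve_locations is not part of the repository): it uses the same defaults as env.py and, based on the requirement that Agent Engine memory uses a regional endpoint, rejects "global" as the service location.

```python
from typing import Mapping, Tuple


def resolve_locations(env: Mapping[str, str]) -> Tuple[str, str]:
    """Return (model_location, service_location) using the same defaults as env.py."""
    model_location = env.get("GOOGLE_CLOUD_LOCATION", "global")
    service_location = env.get("GOOGLE_CLOUD_REGION", "us-central1")
    # Agent Engine and its memory bank need a regional endpoint.
    if service_location == "global":
        raise ValueError(
            "GOOGLE_CLOUD_REGION must be a region such as us-central1, not 'global'"
        )
    return model_location, service_location
```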

Paste this code in dev_signal_agent/app_utils/env.py

```python
import os

import google.auth
import vertexai
from dotenv import load_dotenv
from google.cloud import secretmanager


def _fetch_secrets(project_id: str):
    """Fetch secrets from Secret Manager and return them as a dictionary."""
    secrets_to_fetch = ["REDDIT_CLIENT_ID", "REDDIT_CLIENT_SECRET", "REDDIT_USER_AGENT", "DK_API_KEY"]
    fetched_secrets = {}

    # First, check local environment (for local development via .env)
    for s in secrets_to_fetch:
        val = os.getenv(s)
        if val:
            fetched_secrets[s] = val

    # If keys are missing (common in production), fetch from Secret Manager API
    if len(fetched_secrets) < len(secrets_to_fetch):
        client = secretmanager.SecretManagerServiceClient()
        for secret_id in secrets_to_fetch:
            if secret_id not in fetched_secrets:
                name = f"projects/{project_id}/secrets/{secret_id}/versions/latest"
                try:
                    response = client.access_secret_version(request={"name": name})
                    # DO NOT set os.environ[secret_id] here.
                    # Keep it in this dictionary only.
                    fetched_secrets[secret_id] = response.payload.data.decode("UTF-8")
                except Exception as e:
                    print(f"Warning: Could not fetch {secret_id} from Secret Manager: {e}")

    return fetched_secrets


def init_environment():
    """Consolidated environment discovery."""
    load_dotenv()
    try:
        _, project_id = google.auth.default()
    except Exception:
        project_id = os.getenv("GOOGLE_CLOUD_PROJECT")

    model_location = os.getenv("GOOGLE_CLOUD_LOCATION", "global")
    service_location = os.getenv("GOOGLE_CLOUD_REGION", "us-central1")

    secrets = {}
    if project_id:
        vertexai.init(project=project_id, location=service_location)
        # Fetch secrets into a local variable
        secrets = _fetch_secrets(project_id)

    return project_id, model_location, service_location, secrets
```

Local Testing Script

The Google ADK includes a built-in Web UI that's excellent for visualizing agent logic and tool composition.

You can launch it by running this command in the project root:

```bash
uv run adk web
```

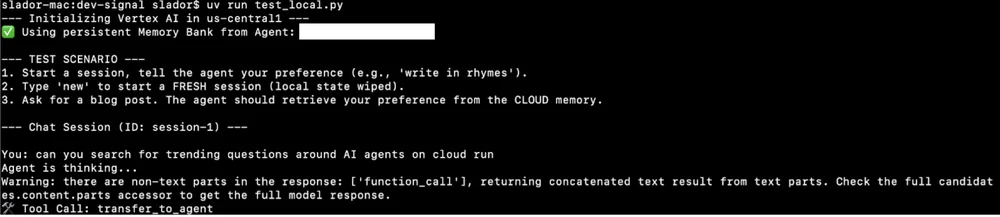

However, the default Web UI won't exercise the long-term memory integration described in this tutorial, because it isn't connected to the Vertex AI memory bank. By default, the generic UI relies on in-memory services that don't persist data across sessions. That's why we use a dedicated test_local.py script that explicitly initializes VertexAiMemoryBankService: even in a local environment, your agent talks to the real cloud-based memory bank, so you can validate that preferences persist.
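To see why a fresh session is a meaningful test, it helps to separate the two stores. The toy classes below are purely illustrative stand-ins, not ADK APIs: the session store keeps chat history per session ID (the role InMemorySessionService plays), while the memory bank keeps facts per user (the role VertexAiMemoryBankService plays in the cloud). A new session starts with empty history, but anything saved under the same user ID can still be recalled.

```python
from collections import defaultdict
from typing import Dict, List


class ToySessionStore:
    """Chat history keyed by session ID; a new session starts empty."""

    def __init__(self) -> None:
        self.history: Dict[str, List[str]] = defaultdict(list)

    def append(self, session_id: str, message: str) -> None:
        self.history[session_id].append(message)


class ToyMemoryBank:
    """Long-term facts keyed by user ID; they outlive any single session."""

    def __init__(self) -> None:
        self.facts: Dict[str, List[str]] = defaultdict(list)

    def remember(self, user_id: str, fact: str) -> None:
        self.facts[user_id].append(fact)

    def search(self, user_id: str, keyword: str) -> List[str]:
        # A real memory bank uses semantic retrieval; substring matching keeps the toy simple.
        return [f for f in self.facts[user_id] if keyword.lower() in f.lower()]


sessions, memory = ToySessionStore(), ToyMemoryBank()
sessions.append("session-1", "Write all my blogs as a 90s rap song.")
memory.remember("local-tester", "Preferred blogging style: 90s rap song")

# A fresh session has no local history...
assert sessions.history["session-2"] == []
# ...but the user's preference is still retrievable from the memory bank.
print(memory.search("local-tester", "style"))  # → ['Preferred blogging style: 90s rap song']
```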

The test_local.py script:

- Connects to the real Vertex AI Agent Engine in the cloud for memory storage.
- Maintains chat history using an in-memory session service for easy local testing and cleanup.
- Executes an interactive chat loop for direct agent communication.

Navigate back to the dev-signal root directory:

```bash
cd ../..
```

Create dev-signal/test_local.py with the following code:

```python
import asyncio
import os
import uuid

import vertexai
from dotenv import load_dotenv
from google.adk.memory.vertex_ai_memory_bank_service import VertexAiMemoryBankService
from google.adk.runners import Runner
from google.adk.sessions import InMemorySessionService
from google.genai import types
from vertexai import agent_engines

from dev_signal_agent.agent import root_agent

# Load environment variables from .env
load_dotenv()


async def main():
    # 1. Set up configuration
    project_id = os.getenv("GOOGLE_CLOUD_PROJECT")
    # Agent Engine (Memory) MUST use a regional endpoint
    resource_location = "us-central1"
    agent_name = "dev-signal"

    print(f"--- Initializing Vertex AI in {resource_location} ---")
    vertexai.init(project=project_id, location=resource_location)

    # 2. Find the Agent Engine resource that hosts the Memory Bank
    existing_agents = list(agent_engines.list(filter=f"display_name={agent_name}"))
    if existing_agents:
        agent_engine = existing_agents[0]
        agent_engine_id = agent_engine.resource_name.split("/")[-1]
        print(f"✅ Using persistent Memory Bank from Agent: {agent_engine_id}")
    else:
        print(f"❌ Error: Agent Engine '{agent_name}' not found. Please deploy with Terraform first.")
        return

    # 3. Initialize services
    # InMemorySessionService keeps local testing simple (session IDs are flexible),
    # while VertexAiMemoryBankService provides REAL cloud persistence.
    session_service = InMemorySessionService()
    memory_service = VertexAiMemoryBankService(
        project=project_id,
        location=resource_location,
        agent_engine_id=agent_engine_id,
    )

    # 4. Create a Runner
    runner = Runner(
        agent=root_agent,
        app_name="dev-signal",
        session_service=session_service,
        memory_service=memory_service,
    )

    # 5. Run a test loop
    user_id = "local-tester"
    print("\n--- TEST SCENARIO ---")
    print("1. Start a session, tell the agent your preference (e.g., 'write in rhymes').")
    print("2. Type 'new' to start a FRESH session (local state wiped).")
    print("3. Ask for a blog post. The agent should retrieve your preference from the CLOUD memory.")

    current_session_id = f"session-{str(uuid.uuid4())[:8]}"
    await session_service.create_session(
        app_name="dev-signal",
        user_id=user_id,
        session_id=current_session_id,
    )
    print(f"\n--- Chat Session (ID: {current_session_id}) ---")

    while True:
        user_input = input("\nYou: ")
        if user_input.lower() in ["exit", "quit"]:
            break

        if user_input.lower() == "new":
            # Simulate starting a completely fresh session
            current_session_id = f"session-{str(uuid.uuid4())[:8]}"
            await session_service.create_session(
                app_name="dev-signal",
                user_id=user_id,
                session_id=current_session_id,
            )
            print(f"\n--- Fresh Session Started (ID: {current_session_id}) ---")
            print("(Local history is empty, retrieval must come from Memory Bank)")
            continue

        print("Agent is thinking...")
        async for event in runner.run_async(
            user_id=user_id,
            session_id=current_session_id,
            new_message=types.Content(role="user", parts=[types.Part(text=user_input)]),
        ):
            # Print any text the agent produces
            if event.content and event.content.parts:
                for part in event.content.parts:
                    if part.text:
                        print(f"Agent: {part.text}")
            # Show which tools the agent invoked
            if event.get_function_calls():
                for fc in event.get_function_calls():
                    print(f"🛠️ Tool Call: {fc.name}")


if __name__ == "__main__":
    asyncio.run(main())
```

Running the Test

Set up Application Default Credentials:

```bash
gcloud auth application-default login
```

Execute the test script:

```bash
uv run test_local.py
```

Test Scenario

This end-to-end validation tests the complete agent workflow: discovery, research, multimodal content generation, and persistent memory retrieval across sessions.

Phase 1: Teaching & Multimodal Creation (Session 1)

Objective: Establish technical context and define a stylistic preference that will be stored in long-term memory.

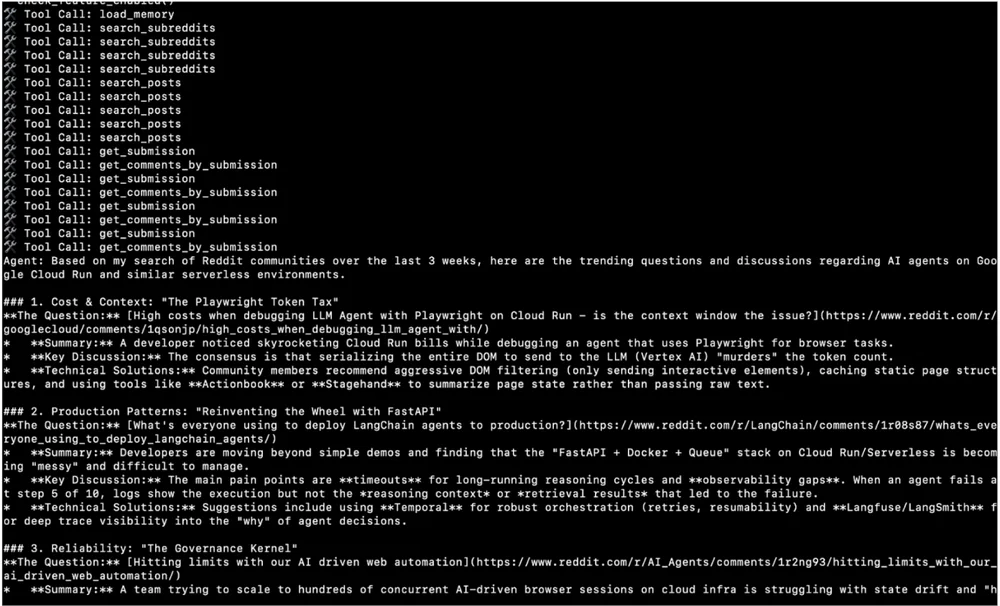

Discovery

Direct the agent to identify trending Cloud Run topics.

Input: "Find high-engagement questions about AI agents on Cloud Run from the last 21 days."

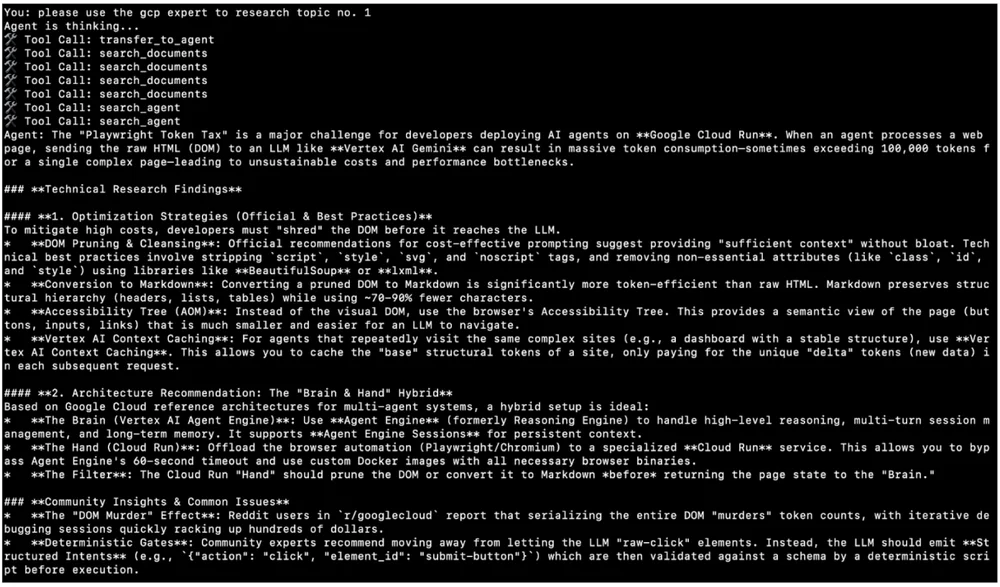

Research

Have the agent conduct detailed research on a selected topic.

Input: "Use the GCP Expert to research topic #1."

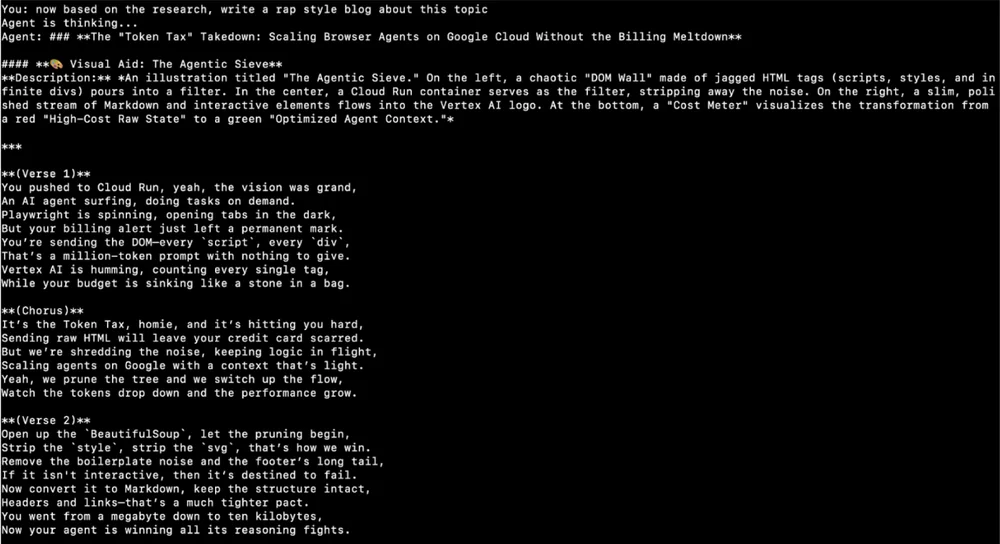

Personalization

Request content creation while establishing a persistent style preference.

Input: "Draft a blog post based on this research. From now on, I want all my technical blogs written in the style of a 90s Rap Song."

Image Generation

Request visual content using the Nano Banana Pro tool. The generated image is stored in your Cloud Storage bucket, with a URL in the format: https://storage.mtls.cloud.google.com/...

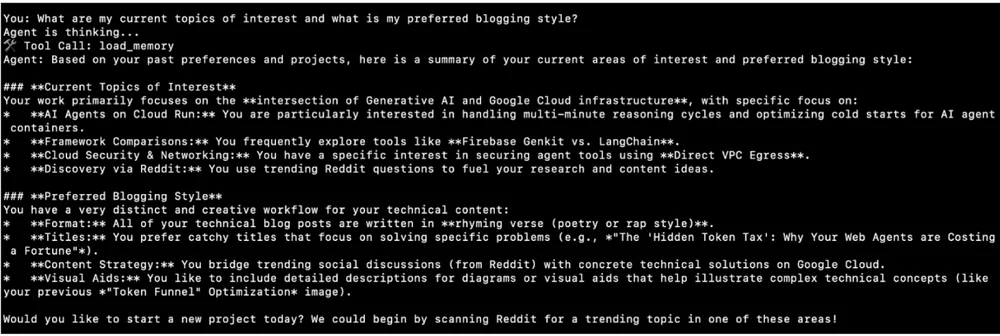

Phase 2: Long-Term Memory Recall (Session 2)

Objective: Validate that the agent retrieves stored preferences from a completely new session with no local history.

- Type "new" to clear the local session state and initialize a fresh session.
- Query your stored preferences to test Vertex AI memory bank retrieval.
  Input: "What are my current topics of interest and what is my preferred blogging style?"
- Verify that the agent retrieves "AI Agents on Cloud Run" and the "Rap" style from persistent cloud storage.
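If you repeat this check often, a small helper can flag which expected details are missing from the agent's answer. This is a hypothetical sketch (missing_recall is not part of the repository) that uses a case-insensitive substring match, so treat it as a quick smoke test rather than a real evaluation. You could apply it to the text that test_local.py prints after the "Agent:" prefix.

```python
from typing import Iterable, List


def missing_recall(reply: str, expected_terms: Iterable[str]) -> List[str]:
    """Return the expected terms that do not appear in the agent's reply (case-insensitive)."""
    text = reply.lower()
    return [term for term in expected_terms if term.lower() not in text]


reply = "You're into AI agents on Cloud Run, and you like your blogs as a 90s rap song."
print(missing_recall(reply, ["AI agents on Cloud Run", "rap"]))  # → []
```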

Final Test: Request a blog post on an unrelated topic, such as "GKE Autopilot", and verify that the agent automatically writes it in rap style without you restating the preference.

Summary

This installment focused on validating agent functionality in a local environment before moving to cloud deployment. By configuring local secrets and leveraging environment-aware utilities, we used a dedicated test runner to confirm that core reasoning and tool integration work as expected. We validated the complete workflow—from Reddit discovery through expert content generation—and verified that the agent correctly retrieves user preferences from the Vertex AI memory bank, even in entirely new sessions.

Want to run the test yourself? Clone the repository and execute the test_local.py script to watch Dev Signal pull your preferences from the Vertex AI memory bank in real time. For a closer look at memory orchestration mechanics, consult this quickstart guide.

In the final installment, we'll deploy our prototype as a production service on Google Cloud Run using Terraform for secure infrastructure provisioning, and explore the path to production readiness through continuous evaluation and security hardening.

Special thanks to Remigiusz Samborski for his thoughtful review and feedback.