The Go Blog

Flight Recorder in Go 1.25

Last year we unveiled enhanced Go execution traces, including a preview of flight recording capabilities. With Go 1.25, the flight recorder is part of the standard library's runtime/trace package and adds a powerful diagnostic option to your toolkit.

Execution traces

Go's runtime can log events during program execution, creating what we call a runtime execution trace. These traces capture detailed information about goroutine behavior and system interactions, making them invaluable for debugging latency problems by showing both when goroutines execute and when they're blocked.

The runtime/trace package lets you collect traces over specific time windows using runtime/trace.Start and runtime/trace.Stop. This approach works well for tests, benchmarks, and CLI tools where you can trace complete executions or targeted segments.
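
As a minimal sketch of this window-based approach, the program below wraps a piece of work in trace.Start and trace.Stop and keeps the resulting trace in memory. The traceWindow helper is a hypothetical name used only for illustration; a real tool would more likely write the trace to a file and open it with go tool trace.

```go
package main

import (
	"bytes"
	"fmt"
	"log"
	"runtime/trace"
)

// traceWindow (hypothetical helper) records an execution trace only while
// work runs and returns the raw trace bytes.
func traceWindow(work func()) ([]byte, error) {
	var buf bytes.Buffer
	// Start fails if tracing is already enabled elsewhere in the program.
	if err := trace.Start(&buf); err != nil {
		return nil, err
	}
	work()
	// Stop returns only after all trace data has been written to buf.
	trace.Stop()
	return buf.Bytes(), nil
}

func main() {
	data, err := traceWindow(func() {
		// A tiny workload: start a goroutine and wait for it.
		done := make(chan struct{})
		go func() { close(done) }()
		<-done
	})
	if err != nil {
		log.Fatal(err)
	}
	fmt.Printf("collected %d bytes of trace data\n", len(data))
}
```

The trace covers exactly the window between Start and Stop, which is why this style suits short, well-delimited executions better than long-running services.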

Long-running services present a different challenge. Web servers may run for days or weeks, making full execution traces impractically large. Problems often surface unexpectedly—a timeout, a failed health check—when it's too late to start tracing.

Random sampling across a fleet can help identify issues proactively, but requires substantial infrastructure for storing, triaging, and processing large volumes of trace data, most of which won't reveal anything useful. When investigating a specific problem, you need a more targeted solution.

Flight recording

The flight recorder solves this by continuously buffering recent execution history in memory. When your program detects a problem, you can snapshot the last few seconds of trace data—capturing the exact window where the issue occurred.

Rather than writing traces to disk or network, the flight recorder maintains a rolling buffer. You can extract this buffer at any moment to examine the precise timeframe leading up to a detected problem.

Example

Let's use the flight recorder to diagnose a performance issue in an HTTP server implementing a number-guessing game. The server exposes a /guess-number endpoint and runs a background goroutine that reports guess statistics every minute.

```go
// bucket is a simple mutex-protected counter.
type bucket struct {
	mu      sync.Mutex
	guesses int
}

func main() {
	// Make one bucket for each valid number a client could guess.
	// The HTTP handler will look up the guessed number in buckets by
	// using the number as an index into the slice.
	buckets := make([]bucket, 100)

	// Every minute, we send a report of how many times each number was guessed.
	go func() {
		for range time.Tick(1 * time.Minute) {
			sendReport(buckets)
		}
	}()

	// Choose the number to be guessed.
	answer := rand.Intn(len(buckets))

	http.HandleFunc("/guess-number", func(w http.ResponseWriter, r *http.Request) {
		start := time.Now()

		// Fetch the number from the URL query variable "guess" and convert it
		// to an integer. Then, validate it.
		guess, err := strconv.Atoi(r.URL.Query().Get("guess"))
		if err != nil || !(0 <= guess && guess < len(buckets)) {
			http.Error(w, "invalid 'guess' value", http.StatusBadRequest)
			return
		}

		// Select the appropriate bucket and safely increment its value.
		b := &buckets[guess]
		b.mu.Lock()
		b.guesses++
		b.mu.Unlock()

		// Respond to the client with the guess and whether it was correct.
		fmt.Fprintf(w, "guess: %d, correct: %t", guess, guess == answer)

		log.Printf("HTTP request: endpoint=/guess-number guess=%d duration=%s", guess, time.Since(start))
	})
	log.Fatal(http.ListenAndServe(":8090", nil))
}

// sendReport posts the current state of buckets to a remote service.
func sendReport(buckets []bucket) {
	counts := make([]int, len(buckets))

	for index := range buckets {
		b := &buckets[index]
		b.mu.Lock()
		defer b.mu.Unlock()

		counts[index] = b.guesses
	}

	// Marshal the report data into a JSON payload.
	b, err := json.Marshal(counts)
	if err != nil {
		log.Printf("failed to marshal report data: error=%s", err)
		return
	}
	url := "http://localhost:8091/guess-number-report"
	if _, err := http.Post(url, "application/json", bytes.NewReader(b)); err != nil {
		log.Printf("failed to send report: %s", err)
	}
}
```

Full server code: https://go.dev/play/p/rX1eyKtVglF. Simple client: https://go.dev/play/p/2PjQ-1ORPiw.

After deployment, users report that some /guess-number requests take unexpectedly long. The logs confirm occasional response times exceeding 100 milliseconds, while most complete in microseconds:

```
2025/09/19 16:52:02 HTTP request: endpoint=/guess-number guess=69 duration=625ns
2025/09/19 16:52:02 HTTP request: endpoint=/guess-number guess=62 duration=458ns
2025/09/19 16:52:02 HTTP request: endpoint=/guess-number guess=42 duration=1.417µs
2025/09/19 16:52:02 HTTP request: endpoint=/guess-number guess=86 duration=115.186167ms
2025/09/19 16:52:02 HTTP request: endpoint=/guess-number guess=0 duration=127.993375ms
```

Can you spot the issue? Either way, let's use the flight recorder to capture what happens before a slow response.

First, configure and start the flight recorder in main:

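```go
// Set up the flight recorder.
fr := trace.NewFlightRecorder(trace.FlightRecorderConfig{
	MinAge:   200 * time.Millisecond,
	MaxBytes: 1 << 20, // 1 MiB
})
// Start returns an error, for example when tracing is already enabled.
if err := fr.Start(); err != nil {
	log.Printf("starting flight recorder failed: %s", err)
}
```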

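MinAge sets the duration for which trace data is reliably retained. We recommend roughly 2x the time window of the event you are debugging: for a 5-second timeout, use 10 seconds. The 200 milliseconds above is twice the 100-millisecond latency threshold used later in this example. MaxBytes caps the size of the buffered trace so that it does not blow up memory usage; expect a few MB of trace data per second of execution, or around 10 MB/s for a busy service.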

Add a helper to capture and save snapshots:

```go
var once sync.Once

// captureSnapshot captures a flight recorder snapshot.
func captureSnapshot(fr *trace.FlightRecorder) {
	// once.Do ensures that the provided function is executed only once.
	once.Do(func() {
		f, err := os.Create("snapshot.trace")
		if err != nil {
			// f is nil when os.Create fails, so f.Name() must not be called here.
			log.Printf("opening snapshot file snapshot.trace failed: %s", err)
			return
		}
		defer f.Close() // ignore error

		// WriteTo writes the flight recorder data to the provided io.Writer.
		_, err = fr.WriteTo(f)
		if err != nil {
			log.Printf("writing snapshot to file %s failed: %s", f.Name(), err)
			return
		}

		// Stop the flight recorder after the snapshot has been taken.
		fr.Stop()
		log.Printf("captured a flight recorder snapshot to %s", f.Name())
	})
}
```

Before logging each completed request, trigger a snapshot if the request exceeded 100 milliseconds:

```go
// Capture a snapshot if the response takes more than 100ms.
// Only the first call has any effect.
if fr.Enabled() && time.Since(start) > 100*time.Millisecond {
	go captureSnapshot(fr)
}
```

Instrumented server code: https://go.dev/play/p/3V33gfIpmjG

Run the server and send requests until a slow response triggers a snapshot.

Analyze the trace using go tool trace. Run go tool trace snapshot.trace to start a local web server, then open the displayed URL.

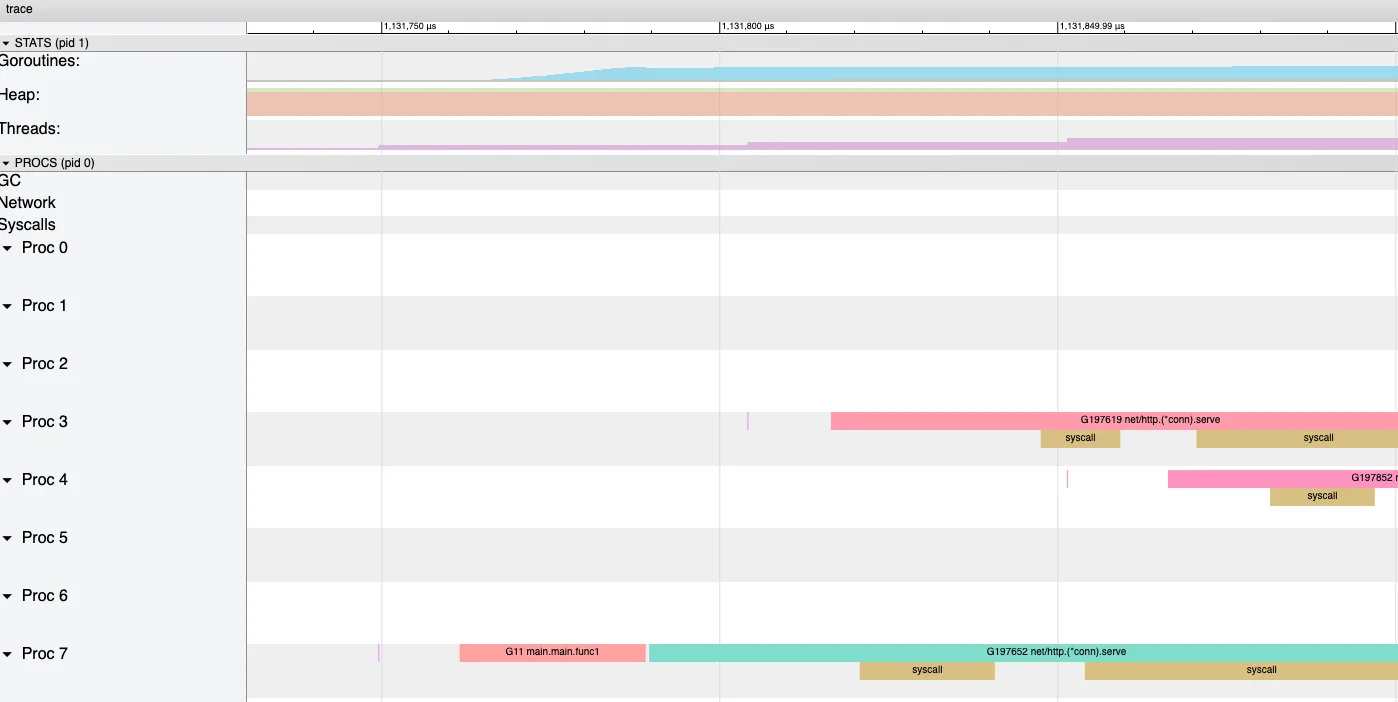

Click "View trace by proc" to visualize the timeline. The "STATS" section shows application state including thread count, heap size, and goroutine count. The "PROCS" section displays how goroutines map onto GOMAXPROCS threads, showing when each goroutine starts, runs, and stops.

Notice the large execution gap on the right—roughly 100ms where nothing happens:

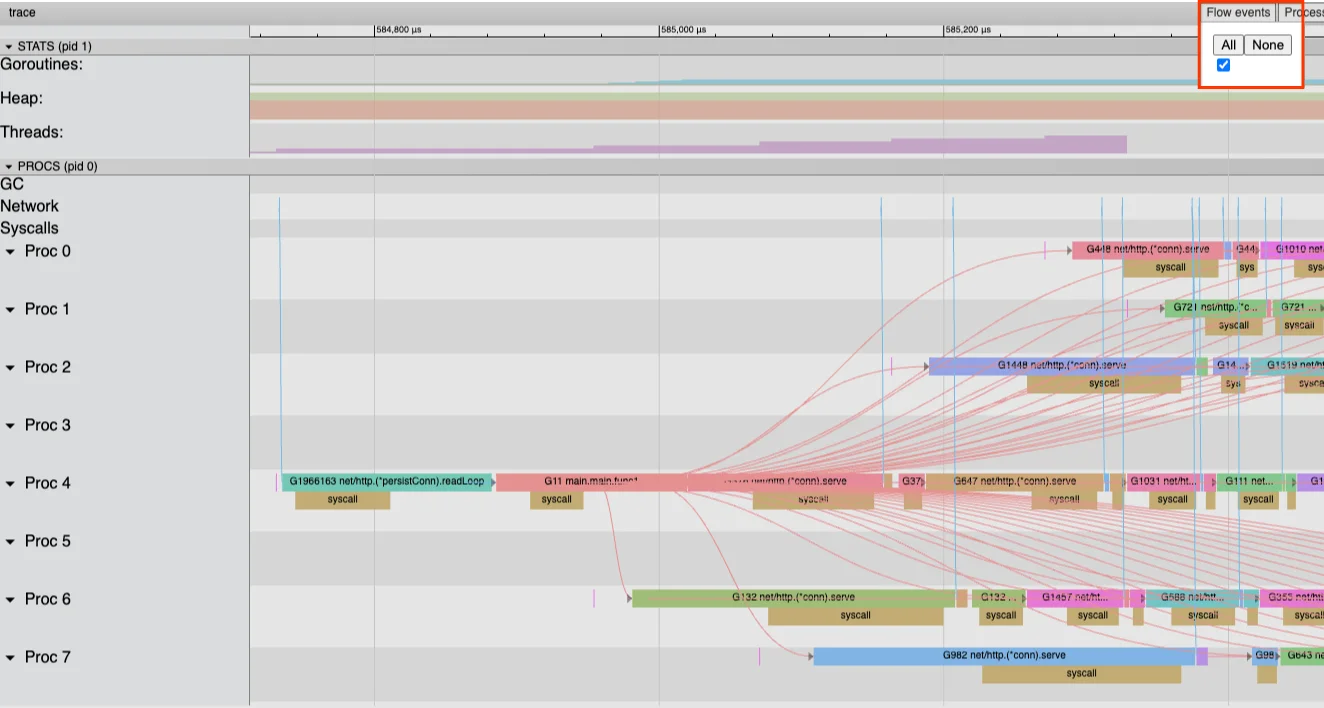

Use the zoom tool (or press 3) to examine the section of the trace immediately after the gap.

Beyond individual goroutine activity, "flow events" show goroutine interactions. Incoming flows indicate what triggered a goroutine to run; outgoing flows show effects on other goroutines. Enabling flow event visualization often reveals the problem's source.

With flow events enabled, the view shows numerous goroutines connecting directly to a single goroutine immediately after the gap in activity:

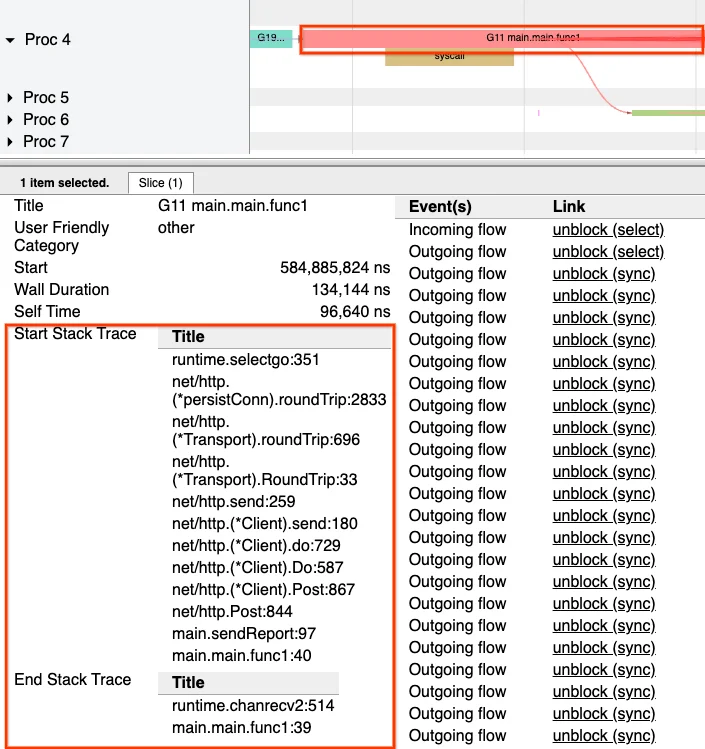

Selecting this particular goroutine displays an event table populated with outgoing flow events, consistent with the earlier flow view observations.

What happened while this goroutine was running? The trace records stack traces at several points. The goroutine's first stack trace shows that it was waiting for an HTTP request to complete when it was scheduled to run. Its last stack trace shows that sendReport had already returned and that the goroutine was waiting for the ticker to fire for the next scheduled report.

During this goroutine's execution window, numerous "outgoing flows" appear, representing interactions with other goroutines. Selecting any Outgoing flow entry navigates to a detailed view of that specific interaction.

This flow points to the Unlock operation within sendReport:

```go
for index := range buckets {
	b := &buckets[index]
	b.mu.Lock()
	defer b.mu.Unlock()

	counts[index] = b.guesses
}
```

The intention in sendReport was to lock each bucket, copy its value, then immediately release the lock.

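The actual behavior differs: the lock isn't released right after copying bucket.guesses. A deferred call runs when the enclosing function returns, not at the end of each loop iteration, so every bucket's lock stays held beyond the loop's end and throughout the HTTP request that sendReport makes. Any /guess-number handler that calls b.mu.Lock during that time blocks until sendReport returns, which accounts for the responses that take 100 milliseconds or more. This subtle bug can be hard to track down in a large production environment.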
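
One way to get the intended behavior is to unlock explicitly inside the loop, so each lock is held only for the copy. The sketch below isolates that loop in a hypothetical snapshotCounts helper; it is a suggested fix under that assumption, not code from the server above.

```go
package main

import (
	"fmt"
	"sync"
)

// bucket is a simple mutex-protected counter, as in the server.
type bucket struct {
	mu      sync.Mutex
	guesses int
}

// snapshotCounts (hypothetical helper) copies each bucket's counter,
// holding each bucket's lock only while its value is read.
func snapshotCounts(buckets []bucket) []int {
	counts := make([]int, len(buckets))
	for index := range buckets {
		b := &buckets[index]
		b.mu.Lock()
		counts[index] = b.guesses
		b.mu.Unlock() // released immediately, not deferred to function return
	}
	return counts
}

func main() {
	buckets := make([]bucket, 3)
	buckets[1].guesses = 7

	counts := snapshotCounts(buckets)

	// TryLock succeeds only if the lock was released by snapshotCounts.
	free := buckets[1].mu.TryLock()
	if free {
		buckets[1].mu.Unlock()
	}
	fmt.Println(counts, free)
	// prints "[0 7 0] true"
}
```

With the locks released before the report is marshaled and posted, request handlers no longer wait on the outgoing HTTP request.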

Execution tracing successfully identified the root cause. Without flight-recording mode, however, running the execution tracer on a long-lived server would generate massive trace data volumes requiring storage, transmission, and analysis. Flight recording provides retrospective analysis capability—capturing only the problematic behavior after it occurs, enabling rapid diagnosis.

Flight recording represents the newest addition to Go's diagnostic toolkit for analyzing running applications. Recent releases have brought steady tracing improvements. Go 1.21 significantly reduced tracing runtime overhead. Go 1.22 introduced a more robust, splittable trace format that enabled features like flight recording. Community tools such as gotraceui and the upcoming programmatic trace parsing capability expand execution trace utility. The Diagnostics page catalogs additional available tools. We encourage you to leverage these resources when developing and optimizing your Go applications.

Thanks

We'd like to take a moment to thank those community members who have been active in the diagnostics meetings, contributed to the designs, and provided feedback over the years: Felix Geisendörfer (@felixge.de), Nick Ripley (@nsrip-dd), Rhys Hiltner (@rhysh), Dominik Honnef (@dominikh), Bryan Boreham (@bboreham), and PJ Malloy (@thepudds).

The discussions, feedback, and work you've all put in have been instrumental in pushing us to a better diagnostics future. Thank you!