The current state of AI across the enterprise landscape

Enterprise AI is evolving rapidly. Organizations are moving beyond basic chat interfaces and code assistants toward autonomous AI agents capable of reading data, interacting with tools, and making independent decisions. According to Microsoft's 2025 Work Trend Index Annual Report, 81% of business leaders expect to integrate agents into their AI strategy within 12 to 18 months, while 24% have already deployed AI organization-wide.

This acceleration brings significant infrastructure challenges. The 2025 HashiCorp Cloud Complexity Report reveals that 97% of organizations juggle multiple tools to manage cloud environments, and 73% report that platform engineering and security teams operate in silos. As AI adoption accelerates, this fragmentation creates compounding layers of complexity and risk.

AI and agentic workflows

Traditional identity and access management systems were built for humans. They rely on predictable behavior patterns and role-based access controls that define which resources a user can touch. These systems assume defined, repeatable workflows.

AI agents break this model. They operate autonomously across databases, APIs, and tools. They can invoke other agents, creating execution chains that shift dynamically from one run to the next. This autonomy delivers tremendous value, but it also introduces security risks that static IAM frameworks can't address.

Scale compounds the problem. Gartner reports that machine-to-human identities are growing at a 45:1 ratio. Each new agent introduces a new identity, new credential paths, expanded policy boundaries, and increased audit requirements. Without a solid security foundation, scaling agentic AI will amplify operational complexity rather than reduce it.

Critical risks within agentic AI

Four risk patterns dominate AI workflows across the industry:

Overprivilege without visibility

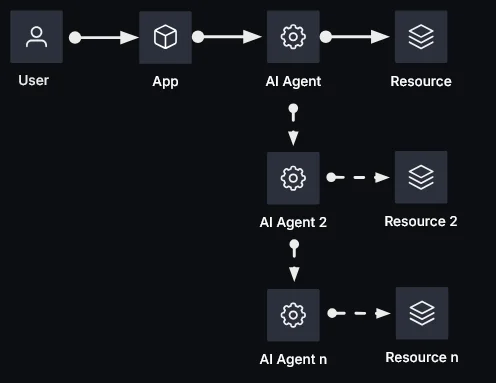

Agents accumulate excessive access. A typical workflow follows this pattern:

- A human invokes an application

- The application invokes an AI agent, which may invoke additional agents

- The agent accesses resources or performs tasks

Agent chains can extend through multiple layers, with different permission sets flowing through each link. Most organizations lack visibility into these chains. To accommodate all potential tasks, teams overprovision permissions, creating a massive blast radius if an agent is compromised or manipulated.
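To make the gap concrete, the chain can be modeled explicitly and the access a request actually needs compared with everything the chain holds. The Python sketch below is illustrative only: the `Link` type, the agent names, the permission strings, and the rule that delegation only narrows access are all assumptions, not part of any product API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Link:
    """One hop in an invocation chain (hypothetical model)."""
    name: str
    permissions: frozenset = field(default_factory=frozenset)


def effective_permissions(chain):
    """Permissions a request should carry at the end of the chain.

    Assumption: delegation only narrows access, so the effective set is the
    intersection of every link's permissions.
    """
    result = None
    for link in chain:
        result = link.permissions if result is None else result & link.permissions
    return result or frozenset()


def blast_radius(chain):
    """Union of permissions held anywhere in the chain: worst-case exposure."""
    reachable = frozenset()
    for link in chain:
        reachable |= link.permissions
    return reachable


chain = [
    Link("app", frozenset({"kb:read", "crm:read"})),
    Link("support-agent", frozenset({"kb:read", "crm:read", "crm:write"})),
    Link("search-agent", frozenset({"kb:read", "billing:read"})),
]

needed = effective_permissions(chain)
excess = blast_radius(chain) - needed
print(sorted(needed))  # ['kb:read']
print(sorted(excess))  # ['billing:read', 'crm:read', 'crm:write']
```

Everything in `excess` is access that some link holds but the end-to-end request does not need, which is one way to quantify overprovisioning.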

Lack of real-time enforcement

Eventually, every agent calls a tool, queries a database, or modifies a system. That's the moment when policies must enforce guardrails. Many teams assume these controls exist or that another team handles them. In most cases, these checks are absent entirely, creating end-to-end security failures.

Impersonation and invisible delegation

Organizations commonly allow agents to act using the identity of the human who invoked them. This approach is convenient but breaks audit trails and obscures delegation. Explicit delegation with user consent provides a better model: the user authorizes the agent, and the system records that authorization. Security teams can then distinguish between user actions and agent actions.

Zero accountability

Without unique agent identities, runtime policy checks, or detailed logging, fundamental security questions become unanswerable: Who approved this action? Which agent executed it? What authority did the agent use? These aren't optional questions for security teams, auditors, or regulators. They're baseline control requirements for compliant AI deployment.

Why immediate action is needed

The IBM 2025 Cost of a Data Breach Report puts the global average breach cost at $4.4 million. The report also shows that 97% of organizations experiencing AI-related security incidents lacked dedicated AI access controls, and 63% had no AI governance policies to manage or prevent shadow AI. With agent compromise now the fastest-growing attack vector, establishing agentic runtime security before deployment is urgent.

Regulatory pressure

SOC 2, GDPR, and PCI DSS require demonstrable unique identities, audit trails, and rapid permission revocation. While most organizations plan to deploy AI agents within 12 to 18 months, only 21% report mature agent governance models. Deploying without proper governance guarantees control failures.

Operational sprawl

As organizations launch dozens or hundreds of agents, sprawl and privilege creep accelerate when teams work in silos and create their own AI policies. Tool and secret sprawl already burden platform, development, and security teams. Agent sprawl will compound the problem.

Agentic AI implementation best practices

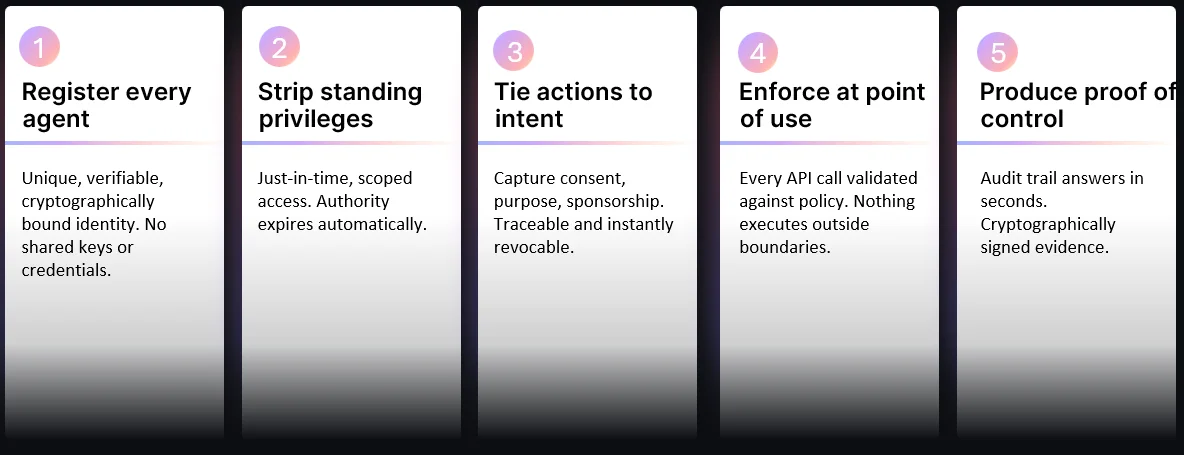

Five imperatives form the foundation of a secure agentic AI strategy:

Register every agent

Every agent needs a unique, verifiable, cryptographically bound identity. No shared keys, no service accounts, no hiding behind human principals. Establish identity through mTLS, SPIFFE, or cloud provider identities.
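As a rough illustration of the "one identity per agent" rule, the sketch below keeps an in-memory registry keyed by SPIFFE-style IDs (URIs of the form `spiffe://<trust-domain>/<path>`). The `AgentRegistry` class, the trust domain, and the agent paths are hypothetical, and the cryptographic binding itself (for example, an X.509 certificate presented over mTLS) is out of scope here.

```python
from urllib.parse import urlparse


class AgentRegistry:
    """In-memory registry mapping one SPIFFE-style ID to one agent (sketch)."""

    def __init__(self, trust_domain):
        self.trust_domain = trust_domain
        self._agents = {}

    def register(self, spiffe_id, owner):
        parsed = urlparse(spiffe_id)
        # A SPIFFE ID is a URI: spiffe://<trust-domain>/<path>.
        if parsed.scheme != "spiffe" or not parsed.path.strip("/"):
            raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")
        if parsed.netloc != self.trust_domain:
            raise ValueError(f"unexpected trust domain: {parsed.netloc!r}")
        if spiffe_id in self._agents:
            # Reusing an identity would recreate the shared-key problem.
            raise ValueError(f"identity already registered: {spiffe_id!r}")
        self._agents[spiffe_id] = owner

    def owner_of(self, spiffe_id):
        return self._agents.get(spiffe_id)


registry = AgentRegistry("example.org")
registry.register("spiffe://example.org/agents/support-bot", owner="support-team")
print(registry.owner_of("spiffe://example.org/agents/support-bot"))  # support-team
```

Rejecting duplicate registrations is the part that matters: two agents can never end up sharing one identity.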

Strip standing privileges

Least privilege starts by revoking standing access. Issue just-in-time dynamic credentials with an explicit time-to-live (TTL) that lasts only as long as the task requires. This dramatically reduces the blast radius when an agent is compromised.
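A minimal sketch of the idea, assuming a hypothetical `issue_credentials` helper rather than any real broker API: each credential is minted on demand, carries its own expiry, and is useless once the TTL has passed. A real broker would also create the account in the target system and revoke it on expiry.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Lease:
    username: str
    password: str
    expires_at: float

    def is_valid(self, now):
        return now < self.expires_at


def issue_credentials(agent_id, ttl_seconds, now=None):
    """Mint a one-off credential that expires after ttl_seconds (sketch)."""
    if ttl_seconds <= 0:
        raise ValueError("ttl_seconds must be positive")
    now = time.time() if now is None else now
    return Lease(
        username=f"{agent_id}-{secrets.token_hex(4)}",
        password=secrets.token_urlsafe(24),
        expires_at=now + ttl_seconds,
    )


# The clock is injected so the example is deterministic.
lease = issue_credentials("support-bot", ttl_seconds=300, now=1_000.0)
print(lease.is_valid(now=1_299.0))  # True: still inside the 5-minute window
print(lease.is_valid(now=1_300.0))  # False: the credential has expired
```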

Tie actions to intent

When requests involve user-specific data or administrative actions, capture user context, consent, and delegation. Transform vague narratives like "agent X can do this" into precise statements: "agent X can do this for user Y, for purpose Z, during session B."
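The precise statement above maps naturally onto a small record type. In this sketch the `Grant` fields and the `is_authorized` helper are illustrative names, and a request is allowed only when agent, action, user, purpose, and session all match a recorded grant.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """'Agent X can do this for user Y, for purpose Z, during session B.'"""
    agent: str
    action: str
    user: str
    purpose: str
    session: str


def is_authorized(grants, agent, action, user, purpose, session):
    """Allow only when every dimension of the request matches a grant."""
    return Grant(agent, action, user, purpose, session) in grants


grants = {Grant("agent-x", "orders:read", "alice", "support-ticket", "session-b")}

print(is_authorized(grants, "agent-x", "orders:read", "alice", "support-ticket", "session-b"))  # True
print(is_authorized(grants, "agent-x", "orders:read", "alice", "support-ticket", "session-c"))  # False
```

The second call fails only because the session differs, which is the point: the same agent, user, and action are not enough on their own.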

Enforce at point of use

Verify every API call, query, and tool invocation against policies at runtime. Deny requests when agents lack authorization to access target systems or resources. This check must happen before action execution, not at login or deploy time.
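One way to picture a runtime check is a wrapper that sits in front of every tool and consults policy on each call. The sketch below assumes a hypothetical in-memory `POLICY` table and tool names; a production system would query a policy engine instead, but the ordering (check first, then execute) is the same.

```python
import functools


class PolicyDenied(Exception):
    """Raised when an agent is not allowed to call a tool."""


# Hypothetical policy table: (agent, tool) pairs that are allowed.
POLICY = {("support-bot", "search_kb")}


def enforce(tool_name):
    def decorator(func):
        @functools.wraps(func)
        def wrapper(agent_id, *args, **kwargs):
            # The check runs on every call, before the tool body executes.
            if (agent_id, tool_name) not in POLICY:
                raise PolicyDenied(f"{agent_id} may not call {tool_name}")
            return func(agent_id, *args, **kwargs)
        return wrapper
    return decorator


@enforce("search_kb")
def search_kb(agent_id, query):
    return f"results for {query!r}"


@enforce("delete_record")
def delete_record(agent_id, record_id):
    return f"deleted {record_id}"


print(search_kb("support-bot", "reset password"))  # results for 'reset password'
try:
    delete_record("support-bot", 42)
except PolicyDenied as err:
    print(err)  # support-bot may not call delete_record
```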

Produce proof of control

Security teams need evidence, not assumptions. Audit trails must answer questions quickly and provide signed proof of control. Detect violations, such as an agent accessing an unauthorized database, in near real time. Maintain clear separation of responsibility: identity providers handle user authentication, SSO, and consent; secrets management systems handle workload identity, credential brokering, policy enforcement, and auditing.
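One way to make an audit trail tamper-evident is to have each entry's signature cover the previous entry's signature, so that editing or removing an earlier record invalidates the chain. The sketch below uses an HMAC with a shared key as a stand-in for signing; the function names and event fields are hypothetical, and a real deployment might use asymmetric signatures and managed keys instead.

```python
import hashlib
import hmac
import json


def _sign(key, event, prev_sig):
    payload = json.dumps({"event": event, "prev": prev_sig}, sort_keys=True)
    return hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()


def append_entry(log, key, event):
    """Append an event whose signature also covers the previous signature."""
    prev_sig = log[-1]["sig"] if log else ""
    log.append({"event": event, "prev": prev_sig, "sig": _sign(key, event, prev_sig)})


def verify_log(log, key):
    """Recompute every signature in order; any edit breaks the chain."""
    prev_sig = ""
    for entry in log:
        expected = _sign(key, entry["event"], prev_sig)
        if entry["prev"] != prev_sig or not hmac.compare_digest(entry["sig"], expected):
            return False
        prev_sig = entry["sig"]
    return True


key = b"demo-key-not-for-production"
log = []
append_entry(log, key, {"agent": "support-bot", "action": "kb:read", "user": "alice"})
append_entry(log, key, {"agent": "support-bot", "action": "crm:read", "user": "alice"})
print(verify_log(log, key))  # True

log[0]["event"]["action"] = "crm:write"  # simulate tampering
print(verify_log(log, key))  # False
```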

Agentic AI use case examples

HashiCorp Vault provides identity-based controls to protect, inspect, connect, and manage secrets, machine identities, service identities, and data access credentials. Vault's policies grant fine-grained access to secrets, identities, PKI, and operations like encryption, decryption, and key signing. Vault also centralizes detailed logs, reporting, auditing, and compliance, making it an ideal foundation for agentic AI security. Three use cases demonstrate this:

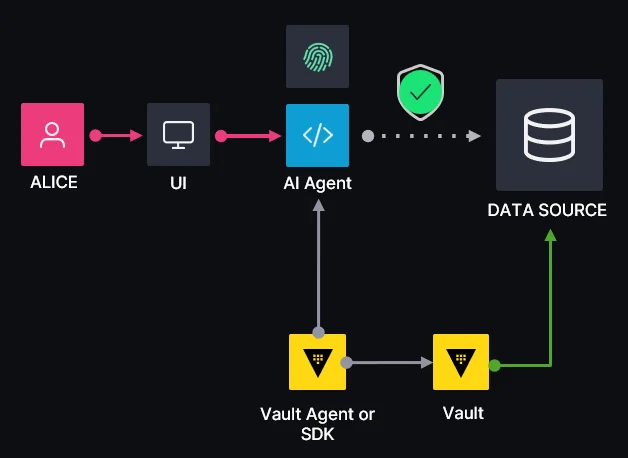

Use case #1: Read-only information retrieval agents

A user (Alice) interacts with a chatbot UI, asking questions like "How do I reset my password?" or "What are your business hours?" An AI agent behind the UI interacts with Vault to retrieve dynamic, just-in-time credentials for accessing the downstream data source containing answers.

This use case requires no user context or consent. Vault creates JIT credentials in the data source with an explicit token TTL and can automatically renew tokens before expiration if needed.
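The sketch below shows how an agent might consume such a credential. It assumes a response shaped like the one Vault's database secrets engine returns for a credentials read (`lease_id`, `lease_duration`, `renewable`, and a `data` object with `username` and `password`). The `plan_credential_use` helper, the role name in the lease ID, and the renew-at-two-thirds rule are assumptions for illustration, and the sample values are placeholders.

```python
def plan_credential_use(response):
    """Extract what an agent needs from a dynamic-credentials response."""
    ttl = response["lease_duration"]
    return {
        "username": response["data"]["username"],
        "password": response["data"]["password"],
        "lease_id": response["lease_id"],
        "ttl_seconds": ttl,
        # Assumption: renew at two-thirds of the TTL; skip if not renewable.
        "renew_after_seconds": ttl * 2 // 3 if response.get("renewable") else None,
    }


# Placeholder values only; real usernames and lease IDs are generated by Vault.
sample = {
    "lease_id": "database/creds/kb-readonly/EXAMPLE",
    "lease_duration": 300,
    "renewable": True,
    "data": {"username": "v-example-user", "password": "example-password"},
}

plan = plan_credential_use(sample)
print(plan["ttl_seconds"])          # 300
print(plan["renew_after_seconds"])  # 200
```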

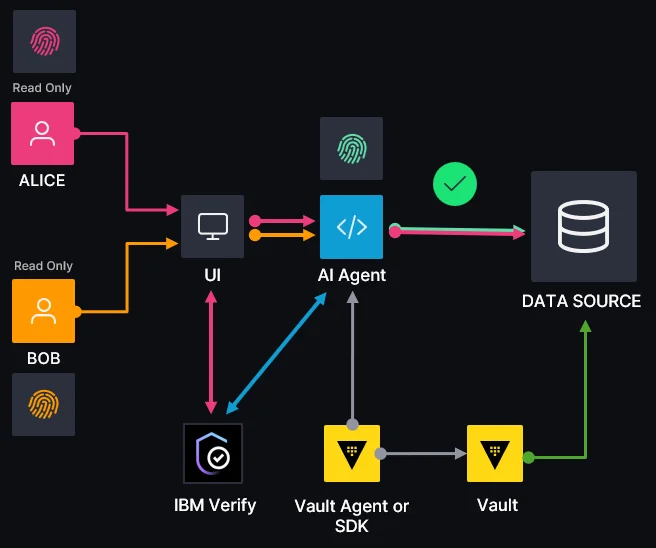

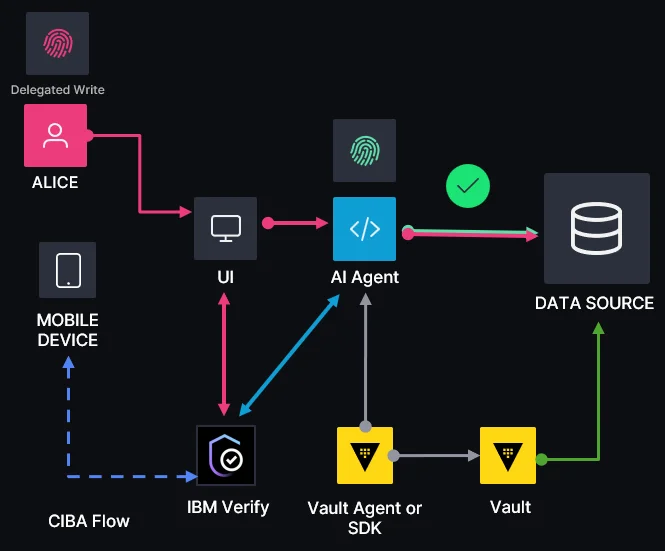

Use case #2: Personalized information retrieval agents

The support chatbot now queries customer-specific data, account information, and personalized recommendations. Because user context and consent are required, this use case introduces an OAuth 2.0 authorization flow with user consent, using IBM Verify as the identity provider (IdP). IBM Verify (or any IdP) returns a JWT containing user context, session ID, and delegation claims.
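Once the token's signature has been verified, the agent-side check reduces to inspecting the claims. The sketch below makes several assumptions that the article does not specify: that the user is in `sub`, the session ID is in `sid`, and the delegated agent appears in an `act` (actor) claim as defined in RFC 8693. The `validate_delegation` helper is hypothetical, and the actual claim names depend on how the IdP is configured.

```python
def validate_delegation(claims, expected_agent, now):
    """Check already-verified JWT claims for user context and delegation.

    Assumption: signature verification happened upstream; this function
    only inspects the decoded claims.
    """
    if claims.get("exp", 0) <= now:
        return False, "token expired"
    if not claims.get("sub"):
        return False, "missing user (sub)"
    if not claims.get("sid"):
        return False, "missing session (sid)"
    actor = claims.get("act", {}).get("sub")
    if actor != expected_agent:
        return False, "agent is not the delegated actor"
    return True, "ok"


claims = {
    "sub": "alice",
    "sid": "session-b",
    "act": {"sub": "support-bot"},
    "exp": 2_000,
}

print(validate_delegation(claims, "support-bot", now=1_000))  # (True, 'ok')
print(validate_delegation(claims, "billing-bot", now=1_000))  # (False, 'agent is not the delegated actor')
```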

As in the previous use case, Vault creates JIT dynamic credentials in the required data sources so the agent can access the user's content.

Use case #3: Personalized and privileged agents

This use case introduces elevated privileges, enabling the agent to perform banking operations, agentic shopping, document authoring, or HR functions like employee onboarding and offboarding. In addition to user context and consent, delegation is required through an OAuth 2.0 Client-Initiated Backchannel Authentication (CIBA) authorization flow with user context from IBM Verify.

The user (Alice) receives a mobile notification whenever the AI agent attempts an elevated operation on their behalf. This ensures proof of control, full auditability, and clear separation of responsibility throughout the entire operation flow.
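The approval step can be pictured as a gate that stays closed until the user responds. The sketch below is an in-memory stand-in for an IdP, not IBM Verify's API: the `BackchannelApprovals` class and `run_elevated` helper are hypothetical, while the `auth_req_id` handle and the `authorization_pending` and `access_denied` error codes follow the CIBA specification's polling model.

```python
import secrets


class BackchannelApprovals:
    """In-memory stand-in for an IdP's CIBA endpoints (hypothetical)."""

    def __init__(self):
        self._requests = {}

    def start(self, user, binding_message):
        # Models the backchannel authentication request; returns auth_req_id.
        auth_req_id = secrets.token_urlsafe(16)
        self._requests[auth_req_id] = {
            "user": user, "message": binding_message, "status": "pending",
        }
        return auth_req_id

    def respond(self, auth_req_id, approved):
        # Models the user approving or denying the mobile notification.
        self._requests[auth_req_id]["status"] = "approved" if approved else "denied"

    def poll(self, auth_req_id):
        # Models polling the token endpoint with the auth_req_id.
        status = self._requests[auth_req_id]["status"]
        if status == "pending":
            return {"error": "authorization_pending"}
        if status == "denied":
            return {"error": "access_denied"}
        return {"access_token": secrets.token_urlsafe(24)}


def run_elevated(idp, auth_req_id, operation):
    """Run the operation only after the user has approved it."""
    result = idp.poll(auth_req_id)
    if "access_token" not in result:
        raise PermissionError(result["error"])
    return operation()


idp = BackchannelApprovals()
req = idp.start("alice", "Approve transfer of $50 to Bob?")
print(idp.poll(req))  # {'error': 'authorization_pending'}

idp.respond(req, approved=True)
print(run_elevated(idp, req, lambda: "transfer complete"))  # transfer complete
```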

Conclusion

Establishing consistent runtime security patterns during early agentic AI adoption is critical. Without solid foundations and standards, individual teams will implement siloed approaches to agent identity, access, and policy enforcement, resulting in fragmentation, inconsistent controls, and increased risk.

Defining these patterns upfront provides the standardization that enables teams to build and scale agentic AI workflows securely, without reinventing security controls for each new use case.

To learn more about how HashiCorp Vault provides the controls essential for safe, scalable agentic AI adoption, check out the Agentic Runtime Security Explained video on the IBM Technology YouTube page, or contact our team for a tailored consultation.